Weariness in the age of fake news

Two weeks ago, in a rare appearance out of character at an event organised by the Anti-Defamation League, British comedian and actor Sacha Baron Cohen called out Facebook founder and CEO Mark Zuckerberg and claimed, “If Facebook were around in the 1930s, it would have allowed Hitler to post 30-second ads on his ‘solution’ to the ‘Jewish problem’.” He later tweeted, “If #MarkZuckerberg and tech CEOs allow a foreign power to interfere in our election (again) or facilitate another genocide (like Myanmar), perhaps they should be sent to jail.”

Facebook has been under fire since 2017 for its irresponsible handling of user data and for standing by as a torrent of “fake news” flooded newsfeeds and distorted public perception of everything from Donald Trump’s intelligence to the shape of the earth. After the US elections, a number of terms were thrown around to describe the effect social media has when used as a medium for distributing news and political content. Terms like ‘echo chamber’ came and went, but nothing changed. Editorials were written and shared, and nothing came of that either.

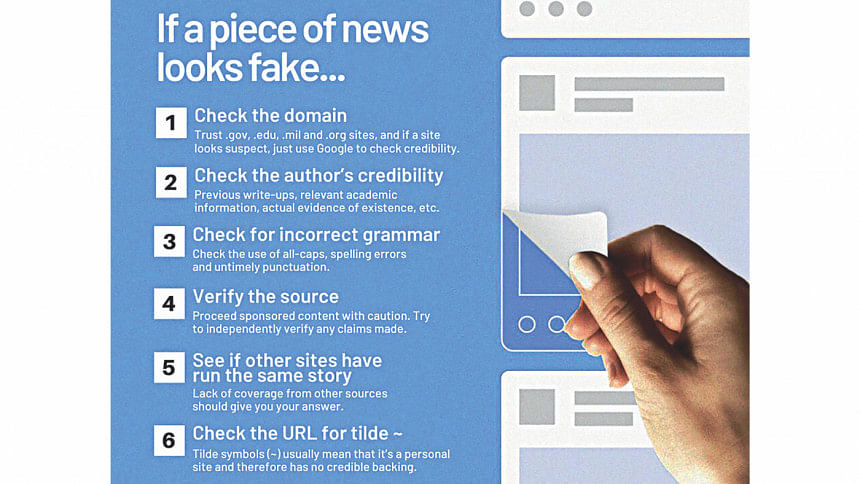

Facebook then rolled out a series of updates which, although by no means sufficient to tackle the complex problem of fake news and the politicisation of newsfeeds, gave users the option of tracking down the source of any shared item and of screening any news link for credibility at the touch of a button. It’s interesting that Facebook considers most of its users willing to check sources for credibility before being influenced by them, as if that option were not there already. For most users, this update simply removes the extra step of turning to a search engine to verify news, while those who are aware of fake news were likely to do that anyway.

It’s not just far-away elections and the western world that are afflicted by this disturbing trend of social media reinforcing internal political (and conspiratorial) biases. Closer to home, communal tensions seem increasingly tied to sentiments expressed by social media users, whether jokingly, maliciously or through entrapment. The 2019 Bhola incident, where Biplob Chandra Baidya’s Facebook profile was hacked by unknown persons and used to post defamatory comments, sparked district-wide riots that left four people dead and over 100 injured. This bears a striking resemblance to the incident in Ramu in 2012, where mobs laid waste to 24 Buddhist temples and hundreds of homes in one of the worst incidents of communal violence in Bangladesh in recent history, again sparked by falsely planted hate speech against Muslims.

Across the border, the systematic targeting of the Rohingya community by the military junta in Myanmar’s Rakhine state was slowly coming to the world’s attention. In 2015, Facebook had only two Burmese-speaking content moderators, tasked with overseeing a user base of nearly 7.3 million that grew out of Myanmar’s deregulation of the telecom industry. An investigation by Reuters from August 2018 revealed that even now, Facebook has only a scant number of moderators to filter through the deluge of hate posts against the Rohingya that incite violence against the community. Most of these moderators speak only English and are based at the company’s regional office in Kuala Lumpur, Malaysia. Several attempts at partnering with Myanmar-based tech companies to outsource content moderation on Facebook have failed. In most cases they were unable to take the posts down before they spread, or the content did not get flagged at all.

In the cases of both Bangladesh and Myanmar, it’s increasingly clear that Facebook must be held accountable for simply not doing enough to curb the spread of fake news, hate speech and data breaches. While a lot of the responsibility falls on users to verify news links before sharing, to report hate speech when they come across it, and to do everything in their power to ensure their data is protected, Facebook too must take up responsibility. One solution may be for Facebook to open local offices in crisis areas as opposed to maintaining only a regional presence. If Facebook works closely with journalists, academics and civil society actors in understanding and correctly approaching the content moderation problem, it might pay off. But do we want a tech company with a track record of mishandling user data to operate locally, where it can take advantage of the lack of data-protection laws and be subject to local political pressures?

Requests from governments for Facebook user data have nearly tripled worldwide in the last four years. In this context, it is difficult to call a local Facebook office a viable solution, since surveillance, restrictions on freedom of speech and net neutrality come into question.

The stronger solution might lie in understanding why unsubstantiated news and unverified claims garner so much response in the digital sphere. Historically, we see that unsubstantiated information gains precedence over actual facts in environments where there is little freedom of expression. All over the globe, policies that muzzle the media from uttering substantiated, verified truth have given rise to a class of citizen who would rather believe any other source of news than the self-censored reporting of mainstream media houses. At the end of the day, mainstream media yearning to survive in these spaces leads to a chain reaction that eventually spirals out of control.

Until we address these policies of hounding news media and allow them to do their job properly, the situation will grow increasingly dire, irrespective of action on the part of tech companies like Facebook, Google and Twitter. It’s not entirely a technology problem; the issue at hand is that the fundamental rights of expression of news media are being encroached upon, when the exact reverse is needed to gain back public trust.

For all latest news, follow The Daily Star's Google News channel.
