Deepfakes demystified: An introduction to the controversial AI tool

Deepfakes have emerged as one of the most fascinating and contentious tools in the rapidly evolving landscape of artificial intelligence. These AI-powered manipulations of audio and video content hold the potential to revolutionise industries yet also pose significant challenges to personal security and societal trust. Although deepfake technology has recently risen to mainstream prominence, not everyone knows exactly what it is or what dangers it can pose. Join us as we delve into the captivating world of deepfakes, exploring their positive applications as well as their potential misuses.

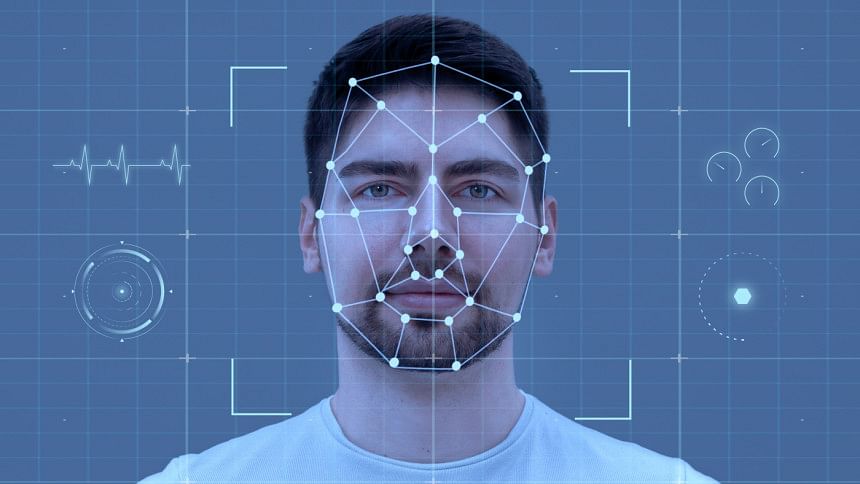

How deepfakes work

Deepfake technology leverages machine learning algorithms to create hyper-realistic digital content. When an AI system is fed a large number of images or audio clips of a person, it can generate a synthetic version of that person whose actions and speech can be driven by a photo, video, or even audio. This technology has a multitude of applications, from benign to malicious. However, the potential for deepfakes to erode trust and distort reality is a significant concern. Therefore, knowing how to protect yourself in the era of deepfakes is crucial - which we'll be getting into.

Harnessing deepfakes for good: Real-world applications

From entertainment and education to healthcare and beyond, deepfakes are being used to innovate and improve lives. Here are some real-life examples of how deepfakes are being used for the better.

Reviving historical figures

Deepfake technology has breathed new life into figures from the past, creating a unique, interactive experience for audiences.

A notable example is the Dalí Museum in St. Petersburg, Florida, which used deepfake technology to recreate the likeness of Salvador Dalí, the renowned surrealist artist. The result was an interactive video of Dalí engaging with museum visitors, creating an immersive experience that brought the artist's work to life.

In the movie 'The Irishman', deepfake technology was also used to de-age Robert De Niro, allowing him to play the same character at different stages of life. This enhances the storytelling and opens up new possibilities for casting and character development.

Honouring musical legends and voice synthesis

In the music industry, deepfakes have been used to pay tribute to iconic artists. Snoop Dogg, for instance, used deepfake technology in one of his music videos to 'resurrect' his friend and fellow rapper, Tupac, who tragically died young.

Project Revoice is a notable initiative that uses deepfake technology to recreate the voices of individuals who have lost their ability to speak due to conditions like ALS (Amyotrophic Lateral Sclerosis).

Using old recordings of the person's voice, the technology can generate a synthetic voice that sounds remarkably similar, giving them back the ability to communicate in their own voice.

Enhancing education

Deepfakes are also being used to make education more engaging. Udacity, an online education platform, has explored using deepfakes to generate lecture videos from text-based content or audio narration, making learning more interactive.

Similarly, speech synthesis company CereProc used deepfake technology to recreate the voice of former U.S. President John F. Kennedy, allowing him to 'deliver' a speech he was scheduled to give on the day he was assassinated.

The 'Deep Nostalgia' feature from MyHeritage uses deepfake technology to animate old family photos, allowing users to see their ancestors in motion.

Improving customer engagement

In the news and fashion industries, deepfakes are being used to enhance customer engagement. Reuters has created an AI-generated news presenter to deliver personalised sports news summaries.

Japanese AI firm Data Grid allows customers to 'try on' clothes virtually by deepfaking their faces onto models. These applications improve the customer experience and open up new possibilities for personalisation and interactivity.

Therapeutic applications

In the field of mental health, deepfakes are being used for therapeutic purposes. For instance, researchers at the University of Southern California's Institute for Creative Technologies have developed a project called 'Digital Survivor of Sexual Assault'. It uses deepfake technology to create a virtual human named 'Ellie' who interacts with trauma survivors, providing a new form of therapy to help patients open up about their experiences.

Unmasking the potential misuses of deepfake technology

In the labyrinth of digital innovation, deepfake technology stands as a powerful yet potentially perilous tool. While its applications can revolutionise various sectors, the misuse of deepfakes can cast a long, unsettling shadow over our digital landscape.

Political manipulation

One of the most alarming misuses of deepfakes is in the realm of politics. By creating hyper-realistic videos of politicians saying or doing things they never said or did, malicious actors can manipulate public opinion and disrupt democratic processes. For instance, a deepfake video of former U.S. President Barack Obama, manipulated to make it appear that he was delivering a public service announcement, went viral, highlighting the potential for political deception.

Non-consensual intimate images

Deepfakes can also be used to create non-consensual intimate images, a form of digital abuse that can cause significant harm to individuals. By superimposing a person's face onto explicit content, perpetrators can cause emotional distress, damage reputations, and even extort victims.

Disinformation and fraud

Deepfakes can be weaponised to spread disinformation and fake news, eroding public trust and stoking social tensions. Fraudsters can use deepfakes to impersonate CEOs or other high-ranking officials, tricking employees into revealing sensitive information or making unauthorised transactions. In 2019, fraudsters used an AI-generated voice to impersonate a senior executive in a phone call to the CEO of a UK energy firm, leading to a fraudulent transfer of €220,000.

As we stand on the precipice of the deepfake era, understanding these potential misuses is crucial. It's a reminder that while we marvel at the power of deepfake technology, we must also remain vigilant, ensuring that this digital mirage doesn't distort our perception of reality.

For all latest news, follow The Daily Star's Google News channel.
