Curbing fake news or relinquishing responsibility?

Social media giant Facebook has been under fire lately for its irresponsible handling of its users' data and for standing by and watching as a torrent of "fake news" flooded newsfeeds and distorted public perception of everything from Donald Trump's intelligence to what actually lies at the centre of the earth. Post-US elections, a number of words were thrown around to describe the effect that social media has when used as a medium for political distribution; terms like "echo chamber" came and went, and nothing changed. Editorials were written and shared, and nothing came of them. Just ahead of a hearing with the US Congress regarding the breach of millions of users' private data, Facebook decided to roll out an update to curb the spread of "fake news" on its social media platform.

Let's get one issue cleared up right away: the recent data breach involving Facebook and Cambridge Analytica, a data company employed by the Trump campaign with shady ties to (and apparently, funding from) Russian sources, is part of the problem, but it's more or less outside of Facebook's purview because the data was gathered without Facebook's explicit consent. While that issue should call into question Facebook's general attitude towards collecting user data and supplying it to advertisers (or researchers like Aleksandr Kogan, who supplied the psychometric profiles and private Facebook info to Cambridge Analytica), the way the events unfolded makes it somewhat clear that Facebook initially had no idea that the data would be used to influence the outcome of something as big as an election in one of the most politically influential nations on the planet. The distinction lies there: what Cambridge Analytica did with the data it acquired was to target users more prone to sharing certain kinds of news, while what the "news" sources did was to supply the kind of misinformation that swings those targeted users' opinions.

Facebook's latest update, while by no means sufficient in tackling this two-layered problem, gives users the option of tracking down the source of any shared item as well as screening any news link for credibility with the touch of a button. What's interesting here is that Facebook considers most of its users to be willing to check sources for credibility. Was that option not there already? For most users, this update removes the extra step of actually using search engines to verify news before being influenced by it (and thus re-sharing it, commenting on it or generally believing the content to be true), while those who were concerned about the validity of the news were likely to verify it anyway, even before this update. So how exactly does this new Facebook feature help in removing the plague of fake news from people's newsfeeds?

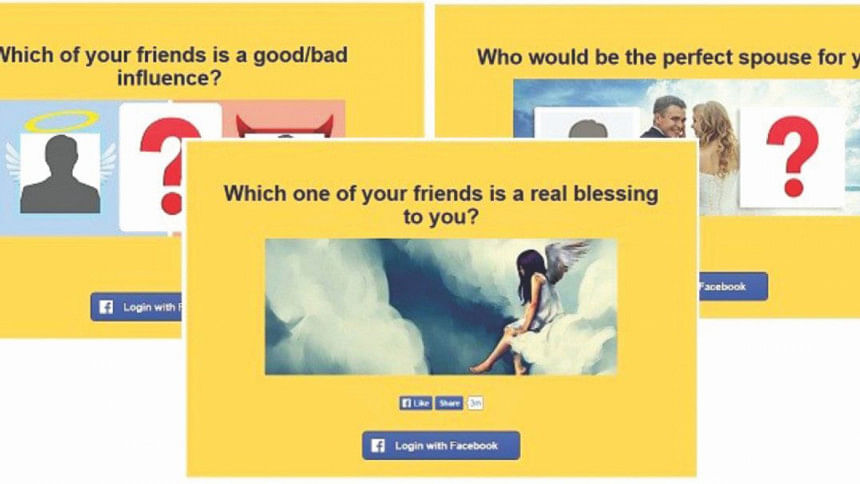

It doesn't. The solution has always been manual. An update from Facebook isn't likely to fix users' propensity for sensational, absurd news items that they ultimately don't bother verifying, so the burden still lies on the users themselves. The fact that Cambridge Analytica was likely able to divide general Facebook users into columns of "likely to share fake news" and "likely to verify source and content before sharing" based on how narcissistic the users were (enough to take quizzes like "who is your celebrity lookalike?") should give us an idea of how our social media personalities are projections of ourselves. The psychometric profile that Cambridge Analytica built works and is "useful", and the one lesson that we should take away from it is to prioritise how we use social media: for socialising and networking while being smart about verifying what we share, sure; for self-gratifying tendencies that make you more prone to thinking you're right about everything, not so much.

The basic argument that you are inclined to share something you feel to be true based on your in-built bias (be it towards Trump instead of Hillary, dogs instead of cats, or your irrational hatred of Justin Bieber) is still true. Facebook's algorithm works that way: it reaffirms your biases based on the pages you've liked, the people you follow and the posts you've shared. It'll show you ads that reaffirm your stance on what brand of cat litter people should use, who is lying in a presidential election campaign and how the moon landing was faked.

If it seems like modern social media should only be used while wearing a tin-foil hat, get that image out of your head. It really doesn't need to be that way, as long as you're bothered enough to verify whatever you see and occasionally come up for air from whatever echo chamber you've drowned yourself in. It's okay to believe certain things, but don't go trying to reaffirm them on social media. Chances are you'll get your self-gratification, but it'll mess things up on a much wider scale.

Social media has transformed the way we consume news and how quickly word spreads. It's opened up avenues for people to conduct business, expand networks and generally connect to a wider community than was ever imaginable. Using it, however, requires responsibility and common sense. Don't expect someone else to regulate it for you.

Shaer Reaz is In-Charge, Shift, The Daily Star.
